We can see that we have added a context to the suggest field and that it is associated with the locale property (path: locale). As there is no model mapped to this index, we won't create a provider but a command. If we launch the populate command, the new index is created. At this point, we now have two indexes in the Elasticsearch cluster.

Autocomplete, or word completion, is a feature in which an application predicts the rest of a word a user is typing. In graphical user interfaces, users can typically press the tab key to accept a suggestion or the down arrow key to accept one of several.

Elasticsearch is a search engine based on Lucene. We will be using different approaches based on the features provided by the search engine.

First, let's have a look at the algorithm used by Elasticsearch to learn how text is handled and processed.

Elasticsearch employs Lucene's index structure called the "inverted index" for its full-text searches. It is a very versatile, easy-to-use and agile structure which provides fast and efficient text search capabilities to Elasticsearch. An inverted index consists of:

- A list of all the unique words, called terms, that appear in any document
- A list of the documents in which the words appear
- A term frequency list, which shows how many times a word has occurred

To get a good grasp of how an inverted index is populated, we will consider two documents to be indexed. To create the inverted index for these two documents, we split the contents of each document into separate words. We then create the list of unique words, the list of document ids in which they occur, and the word frequency list. We generally refer to the unique words occurring in the documents as "terms". In the resulting inverted index table, there are 4 terms, each listed with the documents in which it occurs and its frequency.

Let's see how a simple search operation works.
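To make the inverted index described above more concrete, here is a minimal Python sketch that builds the three lists (terms, documents per term, term frequency) and runs a basic term lookup. The two sample documents, the whitespace tokenizer and the function names `build_inverted_index` and `search` are assumptions made for illustration; this is not how Lucene stores or analyses text internally.

```python
from collections import Counter, defaultdict

# Hypothetical sample documents (the original example contents are not shown here).
documents = {
    1: "elasticsearch is fast",
    2: "elasticsearch is versatile",
}


def build_inverted_index(docs):
    """Map each term to the sorted ids of documents containing it and its total frequency."""
    postings = defaultdict(set)
    frequency = Counter()
    for doc_id, text in docs.items():
        # Naive analysis step: lowercase and split on whitespace.
        for term in text.lower().split():
            postings[term].add(doc_id)
            frequency[term] += 1
    return {
        term: {"docs": sorted(ids), "freq": frequency[term]}
        for term, ids in sorted(postings.items())
    }


def search(index, term):
    """Return the ids of the documents containing the term, or an empty list."""
    entry = index.get(term.lower())
    return entry["docs"] if entry else []


index = build_inverted_index(documents)
for term, entry in index.items():
    print(term, entry["docs"], entry["freq"])
```

A search for a single term is then a dictionary lookup rather than a scan over every document, for example `search(index, "fast")`, which is the property that makes this structure efficient for full-text search.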

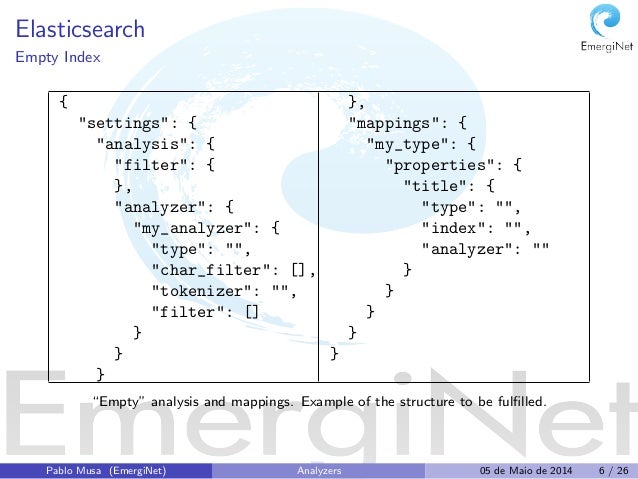

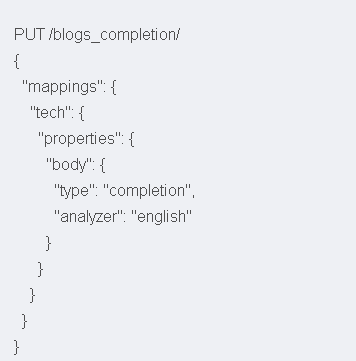

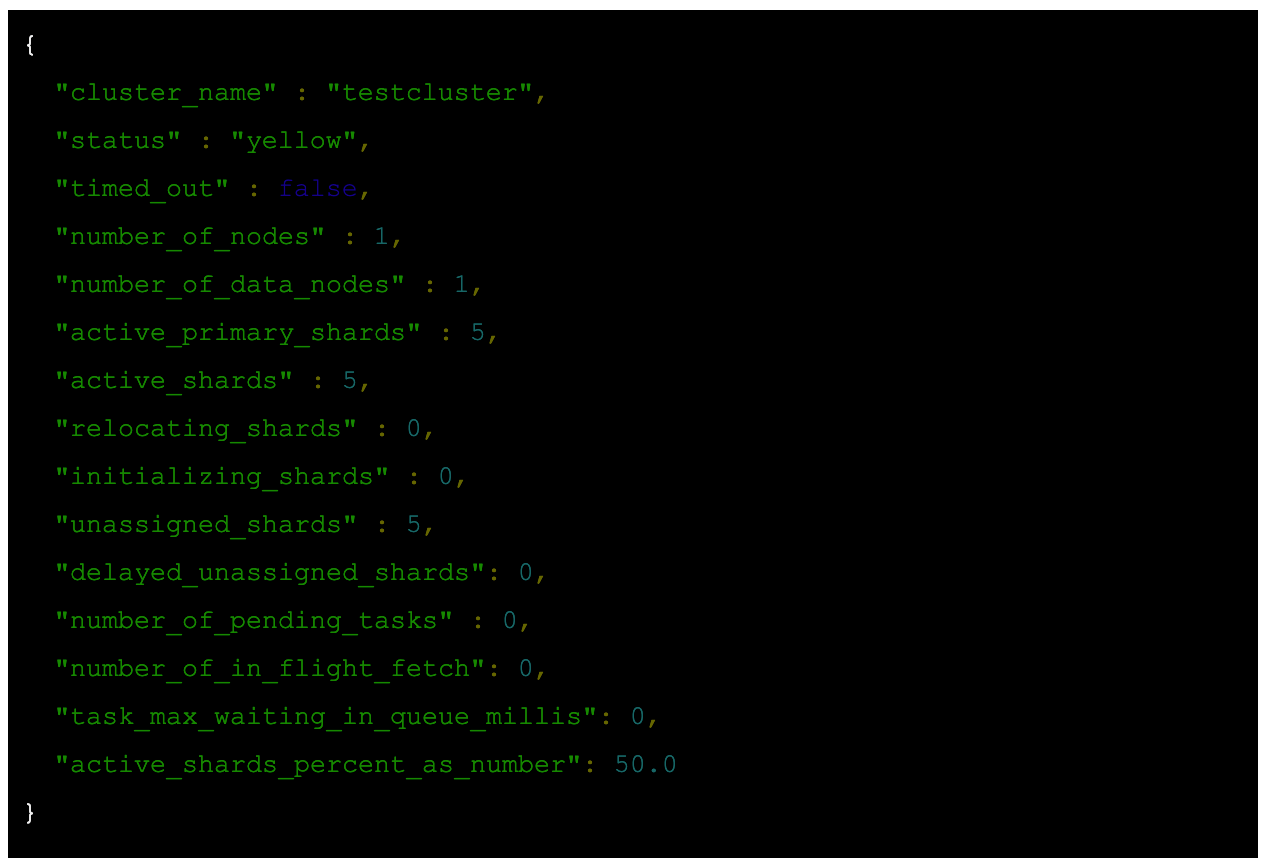

The prerequisites are the same as for the first two parts. It is, of course, recommended to read them (links above) before continuing with this one.

First, we will try to improve our Elasticsearch stack. Until now, we used the "head" plugin to manage our cluster. But this development tool is quite old and not maintained anymore. So, let's add Kibana to our Docker setup. Kibana is an open-source data visualization plugin for Elasticsearch. The list of what you can do with it is impressive (check out the left menu of the next screenshot). Of course, it will allow us to do all the essential maintenance tasks we used to do with head: delete or close an index, create or delete an alias, check a document, verify the index mappings and much more!

To do so, we add a new entry to the docker-compose.yaml file. The project configuration includes the following settings:

- the database service command `--default-authentication-plugin=mysql_native_password`
- the Elasticsearch container name `strangebuzz-elasticsearch-1`
- the port mapping `"9309:9300"`, which is important if you have multiple Elasticsearch instances running
- the `contentEn: ~` field mapping, which uses the default boost value of 1
- the provider service `App\Elasticsearch\Provider\ArticleProvider`

Some explanations about the new index and its mapping: before declaring the new type, I add a custom analyser in the settings section. Its asciifolding filter allows us to ignore French accents, so that a match is found even when accents are not used in the input. For example, if we type "element", the word "élément" should be suggested. Then, in the "type" section, we also use an alias, as for the main app index. In the mapping, we have two properties. The first, suggest, is of the completion type. We need this particular type to be able to use the completion suggester, as we will see in the next chapter. The second property, locale, allows us to filter the suggestions depending on the current locale (en or fr).
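The effect of the asciifolding filter and of the locale context can be approximated outside Elasticsearch with a few lines of Python. This is only a sketch of the idea: the `fold_ascii` and `suggest` helpers and the sample suggestion list are hypothetical, and the folding relies on Unicode decomposition rather than on Elasticsearch's own filter.

```python
import unicodedata


def fold_ascii(text):
    """Strip diacritics and lowercase, roughly what ASCII folding does for accented Latin letters."""
    decomposed = unicodedata.normalize("NFKD", text)
    return "".join(ch for ch in decomposed if not unicodedata.combining(ch)).lower()


# Hypothetical suggestion entries as (text, locale) pairs.
SUGGESTIONS = [
    ("élément", "fr"),
    ("élégant", "fr"),
    ("element", "en"),
    ("elastic", "en"),
]


def suggest(prefix, locale):
    """Return the suggestions for the locale whose folded form starts with the folded prefix."""
    folded_prefix = fold_ascii(prefix)
    return [
        text
        for text, entry_locale in SUGGESTIONS
        if entry_locale == locale and fold_ascii(text).startswith(folded_prefix)
    ]


print(suggest("element", "fr"))
print(suggest("el", "en"))
```

Typing "element" with the fr locale returns "élément" because both the input and the stored text are folded before the prefix comparison, while the locale check plays the role of the context filter.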
